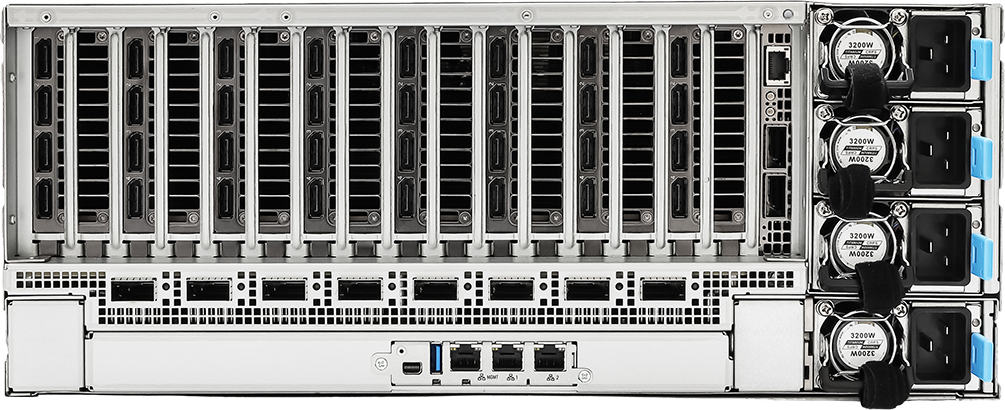

TITAN GM640R-G7

AI & GPU Server

- 4U NVIDIA MGX™

- Dual Intel® Xeon® 6700P-series, 6500P-series, and 6700E-series Processors

- Supports 8 dual-slot GPUs with 600W TDP each

- Up to 4TB DDR5-6400 MT/s memory

- 16 x Hot-swap E1.S (PCIe 5.0 x4) drive bays and 2 x M.2

- ASPEED AST2600 management

- 3200W (3+1) 80 PLUS Titanium Power Supply

- Perfect for AI Training, AI Inference, Data Analytics and more

Product Overview

The CIARA TITAN GM640R-G7 is a 4U NVIDIA MGX™ server designed for advanced AI training, large-scale inference, and distributed computing. Powered by dual Intel® Xeon® 6th Gen processors (up to 350 W each) and built on NVIDIA’s GPU-optimized MGX™ architecture, it delivers consistent cooling and sustained performance for up to eight NVIDIA GPUs (600 W TDP each), including RTX™ 6000, H200 NVL, H100 NVL, and L40S.

With support for up to 4 TB of DDR5 memory, eight 400 Gb/s QSFP112 ports, E1.S PCIe Gen5 storage, and PCIe 5.0 expansion, the system provides the memory capacity, network throughput, and I/O bandwidth needed for AI clusters and future GPU-intensive workloads.
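For capacity planning, the power figures quoted on this page can be combined into a rough budget. The sketch below is illustrative only: it assumes that "3+1" means three active 3200 W supplies plus one redundant unit, and it counts only GPU and CPU TDP, so memory, drives, network adapters, and fans must come out of the remaining headroom. The function name `power_budget` is hypothetical.

```python
# Figures quoted on this page
GPU_COUNT = 8
GPU_TDP_W = 600
CPU_COUNT = 2
CPU_TDP_W = 350
PSU_RATED_W = 3200

# Assumption: "3+1" means three active supplies plus one redundant spare
PSU_ACTIVE = 3


def power_budget():
    """Return (gpu_cpu_load_w, redundant_capacity_w, headroom_w)."""
    load = GPU_COUNT * GPU_TDP_W + CPU_COUNT * CPU_TDP_W
    capacity = PSU_ACTIVE * PSU_RATED_W
    return load, capacity, capacity - load


if __name__ == "__main__":
    load, capacity, headroom = power_budget()
    print(f"GPU + CPU TDP load:        {load} W")
    print(f"Capacity with one PSU out: {capacity} W")
    print(f"Headroom for other parts:  {headroom} W")
```

Under these assumptions, the GPUs and CPUs at full TDP draw 5500 W against 9600 W of redundant capacity. Actual draw depends on the installed configuration and workload.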

Key Features and Benefits

NVIDIA MGX™ GPU-Optimized Architecture

The CIARA TITAN GM640R-G7 is built on NVIDIA’s MGX™ modular platform, which features standardized slot spacing, airflow paths, and power delivery. This design provides consistent cooling, sustained performance, and support for future high-power GPU generations.

High-Performance Intel Compute

The platform’s dual Intel® Xeon® 6th Gen processors (up to 350 W each) provide strong multi-core performance for AI inference, data processing, and HPC workloads. Intel AMX and DL Boost technologies accelerate CPU-based AI tasks and efficiently distribute data to the GPUs.

Multi-GPU Acceleration for Demanding AI Workloads

The system supports up to eight NVIDIA GPUs, including the RTX™ 6000, H200 NVL, H100 NVL, and L40S. It delivers the computing power necessary for deep learning training, generative AI, simulations, and large-scale inference workloads.

High-Speed Networking for AI Clusters

Eight 400 Gb/s QSFP112 ports powered by NVIDIA ConnectX®-8 technology give the system up to 3.2 Tb/s of aggregate network bandwidth for distributed training, multi-node inference, and large-scale data movement. This level of networking is essential for building and scaling modern AI clusters.

Perfect For

AI Training

AI Inference

Simulation

Research
